Residential Proxies

Allowlisted 200M+ IPs from real ISP. Managed/obtained proxies via dashboard.

Proxies Services

Residential Proxies

Allowlisted 200M+ IPs from real ISP. Managed/obtained proxies via dashboard.

Residential (Socks5) Proxies

Over 200 million real IPs in 190+ locations,

Unlimited Residential Proxies

Unlimited use of IP and Traffic, AI Intelligent Rotating Residential Proxies

Static Residential proxies

Long-lasting dedicated proxy, non-rotating residential proxy

Dedicated Datacenter Proxies

Use stable, fast, and furious 700K+ datacenter IPs worldwide.

Mobile Proxies

Dive into a 10M+ ethically-sourced mobile lP pool with 160+ locations and 700+ ASNs.

Scrapers

Collection of public structured data from all websites

Proxies

Residential Proxies

Allowlisted 200M+ IPs from real ISP. Managed/obtained proxies via dashboard.

Starts from

$0.6/ GB

Residential (Socks5) Proxies

Over 200 million real IPs in 190+ locations,

Starts from

$0.03/ IP

Unlimited Residential Proxies

Unlimited use of IP and Traffic, AI Intelligent Rotating Residential Proxies

Starts from

$1816/ MONTH

Rotating ISP Proxies

ABCProxy's Rotating ISP Proxies guarantee long session time.

Starts from

$0.4/ GB

Static Residential proxies

Long-lasting dedicated proxy, non-rotating residential proxy

Starts from

$4.5/MONTH

Dedicated Datacenter Proxies

Use stable, fast, and furious 700K+ datacenter IPs worldwide.

Starts from

$4.5/MONTH

Mobile Proxies

Allowlisted 200M+ IPs from real ISP. Managed/obtained proxies via dashboard.

Starts from

$1.2/ GB

Scrapers

Web Unblocker

Simulate real user behavior to over-come anti-bot detection

Starts from

$1.2/GB

Serp API

Get real-time search engine data With SERP API

Starts from

$0.3/1K results

Scraping Browser

Scale scraping browsers with built-inunblocking and hosting

Starts from

$2.5/GB

Documentation

All features, parameters, and integration details, backed by code samples in every coding language.

TOOLS

Resources

Addons

ABCProxy Extension for Chrome

Free Chrome proxy manager extension that works with any proxy provider.

ABCProxy Extension for Firefox

Free Firefox proxy manager extension that works with any proxy provider.

Proxy Manager

Manage all proxies using APM interface

Proxy Checker

Free online proxy checker analyzing health, type, and country.

Proxies

AI Developmen

Acquire large-scale multimodal web data for machine learning

Sales & E-commerce

Collect pricing data on every product acrossthe web to get and maintain a competitive advantage

Threat Intelligence

Get real-time data and access multiple geo-locations around the world.

Copyright Infringement Monitoring

Find and gather all the evidence to stop copyright infringements.

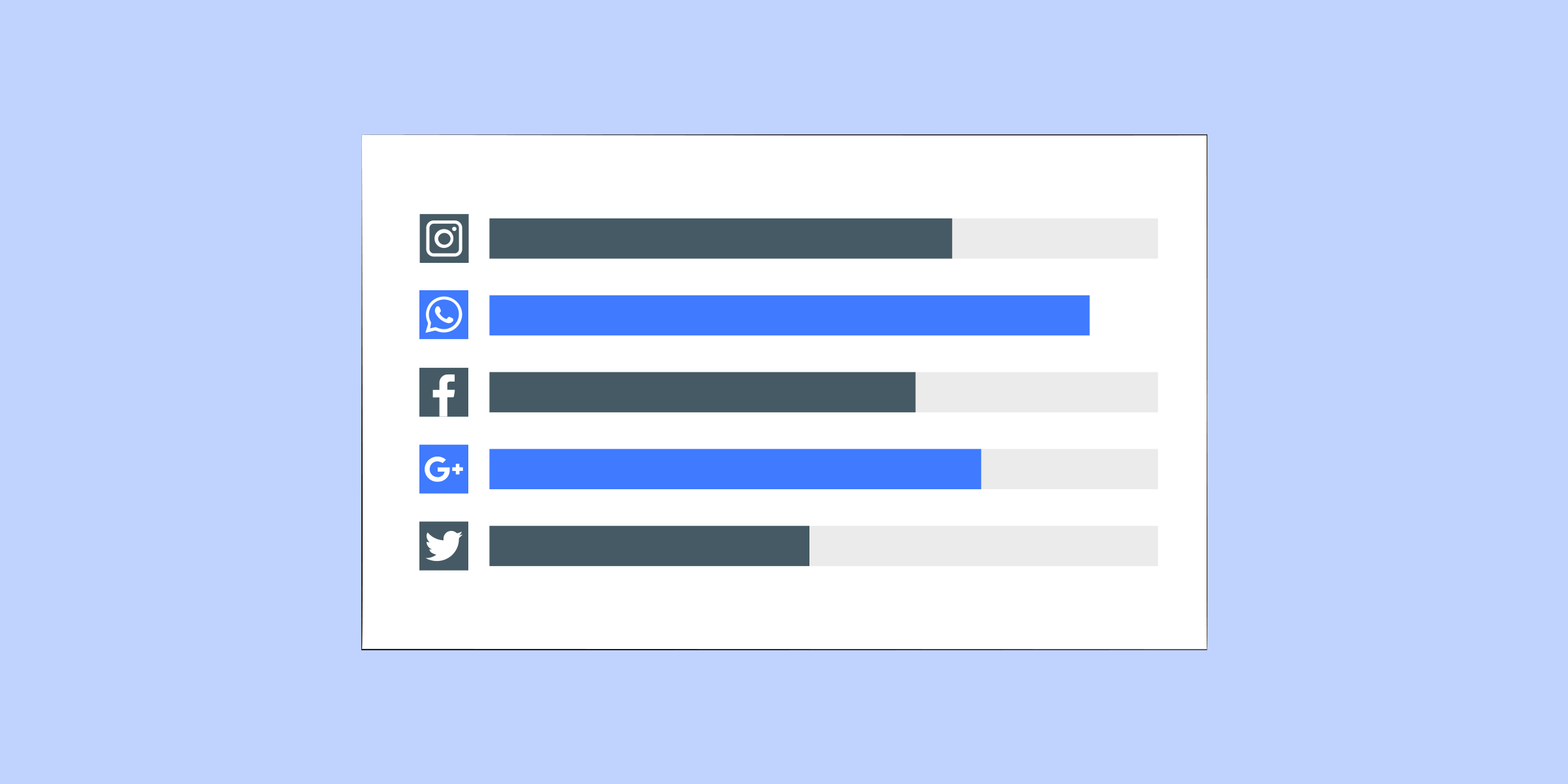

Social Media for Marketing

Dominate your industry space on social media with smarter campaigns, anticipate the next big trends

Travel Fare Aggregation

Get real-time data and access multiple geo-locations around the world.

By Use Case

English

繁體中文

Русский

Indonesia

Português

Español

بالعربية

Amazon, as one of the largest e-commerce platforms globally, offers a treasure trove of data for businesses and individuals interested in market research, price monitoring, and competitive analysis. Scraping Amazon can provide valuable insights, but it must be done carefully to comply with legal and ethical guidelines. In this comprehensive guide, we'll explore the best practices for scraping Amazon, tools to use, and the ethical considerations to keep in mind.

Web scraping involves extracting data from websites using automated tools or scripts. Scraping Amazon can help gather information on product prices, reviews, ratings, and more. However, due to Amazon's strict terms of service and robust anti-scraping measures, it's crucial to approach this task with the right strategies and tools.
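As a rough illustration of the extraction step described above, the sketch below parses a product title, price, and rating out of an HTML fragment using only Python's standard library. The markup, class names, and the `ProductParser` and `parse_product` helpers are hypothetical and invented for this example; they do not reflect Amazon's actual page structure, and no network request is made.

```python
from html.parser import HTMLParser

# Hypothetical markup for illustration only; real product pages differ and change often.
SAMPLE_HTML = """
<div class="product">
  <span class="title">Example Widget</span>
  <span class="price">$19.99</span>
  <span class="rating">4.5</span>
</div>
"""


class ProductParser(HTMLParser):
    """Collects the text of <span> elements whose class names a field we want."""

    FIELDS = {"title", "price", "rating"}

    def __init__(self):
        super().__init__()
        self.record = {}
        self._current = None  # field name of the span we are inside, if any

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if tag == "span" and cls in self.FIELDS:
            self._current = cls

    def handle_data(self, data):
        # Store non-empty text found inside a span we are tracking.
        if self._current and data.strip():
            self.record[self._current] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._current = None


def parse_product(html):
    """Return a dict with title (str), price (float), and rating (float)."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    record = parser.record
    # Convert text fields to numbers where the sample format allows it.
    return {
        "title": record.get("title"),
        "price": float(record["price"].lstrip("$")) if "price" in record else None,
        "rating": float(record["rating"]) if "rating" in record else None,
    }


product = parse_product(SAMPLE_HTML)
print(product)
```

Keeping the parsing logic separate from any page fetching makes it easy to test against saved HTML and to adjust when the target markup changes.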

Used well, Amazon data can inform decisions about pricing, product positioning, and competitors. Getting there depends on choosing suitable tools, following best practices, and staying within Amazon's terms of service and applicable legal guidelines, so that the data you gather is collected both effectively and responsibly.
